Following the recent announcement of BRCK Inc's learning division, led by Nivi Mukherjee, comes exciting news about a major agreement signed between BRCK Inc and the venerable Kenyatta University.

Do see the announcement on the Kenyatta University website too.

Kenyatta University, BRCK sign digital literacy MoU

Nairobi, 7 August 2015 – Kenyatta University, a leading Kenyan academic institution, and BRCK, a local technology firm based in Nairobi, have today signed a Memorandum of Understanding (MoU) to promote digital literacy in the country.

The MoU covers, among other areas, collaboration in the design and development of innovative technological solutions; research; advocacy and stakeholder engagement; content development; and training and capacity building.

Speaking after the signing ceremony, KU Vice Chancellor Prof. Olive Mugenda said the partnership will enable the two organisations to use technology to deliver educational content and impart knowledge, skills, attitudes and values that will bring far-reaching changes to the education sector.

“It is a great pleasure to partner with like-minded organisations such as BRCK with whom we have a shared vision and an appreciation of the role that technology can and should play in the overall education process. Both BRCK and KU are at the forefront of developing and rolling out cutting edge digital solutions that will transform the way education is delivered resulting in an enhanced learning environment that offers competitive advantage in the workforce for the young people of Africa and generations to come,” she said.

The partnership will also firmly establish Kenya as a global hub for digital education by creating a unique centre that brings together deployments of digital learning solutions, teacher training, curriculum design, and the knowledge gained in device design, manufacturing and logistics, resulting in a 21st-century digital education centre.

The two organisations will leverage each other’s strengths to ensure a successful joint venture delivering uncompromised standards and techniques in teaching and learning. Kenyatta University has a dedicated research division and a very vibrant innovation and incubation centre, which has seen the creation of winning start-up companies in the country. The institution also has a very strong digital school, which was re-engineered recently.

BRCK, for its part, has an unrivalled capability to develop innovative connectivity technologies, making the two partners a formidable, dynamic, forward-thinking team.

As part of the agreement, Kenyatta University will host a manufacturing and assembly plant, which will enable the institution to manufacture more tablets. This will create jobs for the youth, enable technology transfer, and help mitigate depreciation of the exchange rate by producing the devices locally rather than importing them.

“We were the first university in the country to use tablets to deliver content to the students in the digital school. The tablets are very interactive with software that makes teaching easy and interactive. Following our partnership with BRCK, we hope to start a manufacturing plant to make cost effective tablets as well as scale up their use to other schools,” Prof. Mugenda added.

This partnership will create the infrastructure and skills not only for digital learning but also to design, build and manufacture a myriad of technologies in future, creating indigenous digital solutions for a range of applications in education and beyond that will be deployed around the continent.

BRCK Board member Juliana Rotich said: “Education has been identified as the common thread that will shape a sustainable future for economies around the globe and digital technology is one of the tools that will provide a leg-up to this new mode of learning. Through this joint venture, we aim to overcome barriers in the sector and offer constructive value through content, connectivity and functionality.”

About Kenyatta University

Kenyatta University is an international university based in Nairobi, Kenya. It was established in 1985 through an Act of Parliament. The University’s main campus is located along the Thika Superhighway, 20 kilometres from Nairobi city centre, and it also has 10 satellite campuses and 10 distance and e-learning centres. It offers over 500 programmes across 17 schools.

By April 2015, the student population was over 70,000, with a staff of over 3,000, both teaching and non-teaching. Its infrastructure, among other key ranking criteria, has placed the University as the best in the country and the region.

Kenyatta University has an edge over other universities due to its emphasis on practical, hands-on knowledge and the skills training imparted to both students and the larger international community.

Kenyatta University is home to some of the world’s top scholars, researchers and experts in diverse fields. The institution prides itself on providing high-quality programmes that attract individuals who wish to be globally competitive. To this end, the University has invested heavily in infrastructure and facilities to offer its students the best experience in quality academic programmes, in a nurturing environment in which they learn and grow.

The University has partnered with other key organisations, for example the Young African Leaders Initiative (YALI), which is supported by the U.S. Government, and the African Centre for Transformative and Inclusive Leadership (ACTIL), which is sponsored by UN Women.

About BRCK

BRCK is a hardware and services tech company based in Nairobi, Kenya. As the first company to pursue ground-up design and engineering of consumer electronics in East Africa, it has developed a connectivity device, also known as BRCK, which is designed to work in harsh environments where electricity is intermittent. BRCK can support up to 40 devices, has an 8-hour battery life when the power is out, and can switch seamlessly between Ethernet, WiFi, 3G and 4G. The initial BRCK units started shipping in July 2014, and by February 2015 thousands of BRCKs had been sold in 54 countries around the world.

BRCK is a spin-off from the world-acclaimed Ushahidi, a Kenyan technology company that builds open source software tools and has received accolades for the impact that its creative and cutting-edge solutions are having around the world.

Media contacts

Evelyn Njoroge, africapractice [email protected] 0721704712

Sally Kahiu, africapractice [email protected] 0706322488

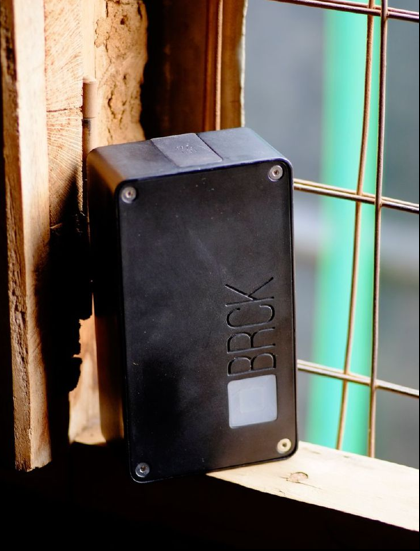

Preparing a PicoBRCK for deployment.

Troubleshooting network connectivity issues.

Typical power outlets.